When you measure anything, precision and accuracy determine whether your data is trustworthy and meaningful. These two concepts shape scientific research, engineering results, and everyday decision-making in the United States.

If you misunderstand them, you risk flawed conclusions, costly mistakes, and poor-quality outcomes, so you must grasp how they work together before you rely on any measurement. Keep reading to learn more about precision vs accuracy.

What Precision vs Accuracy Really Means

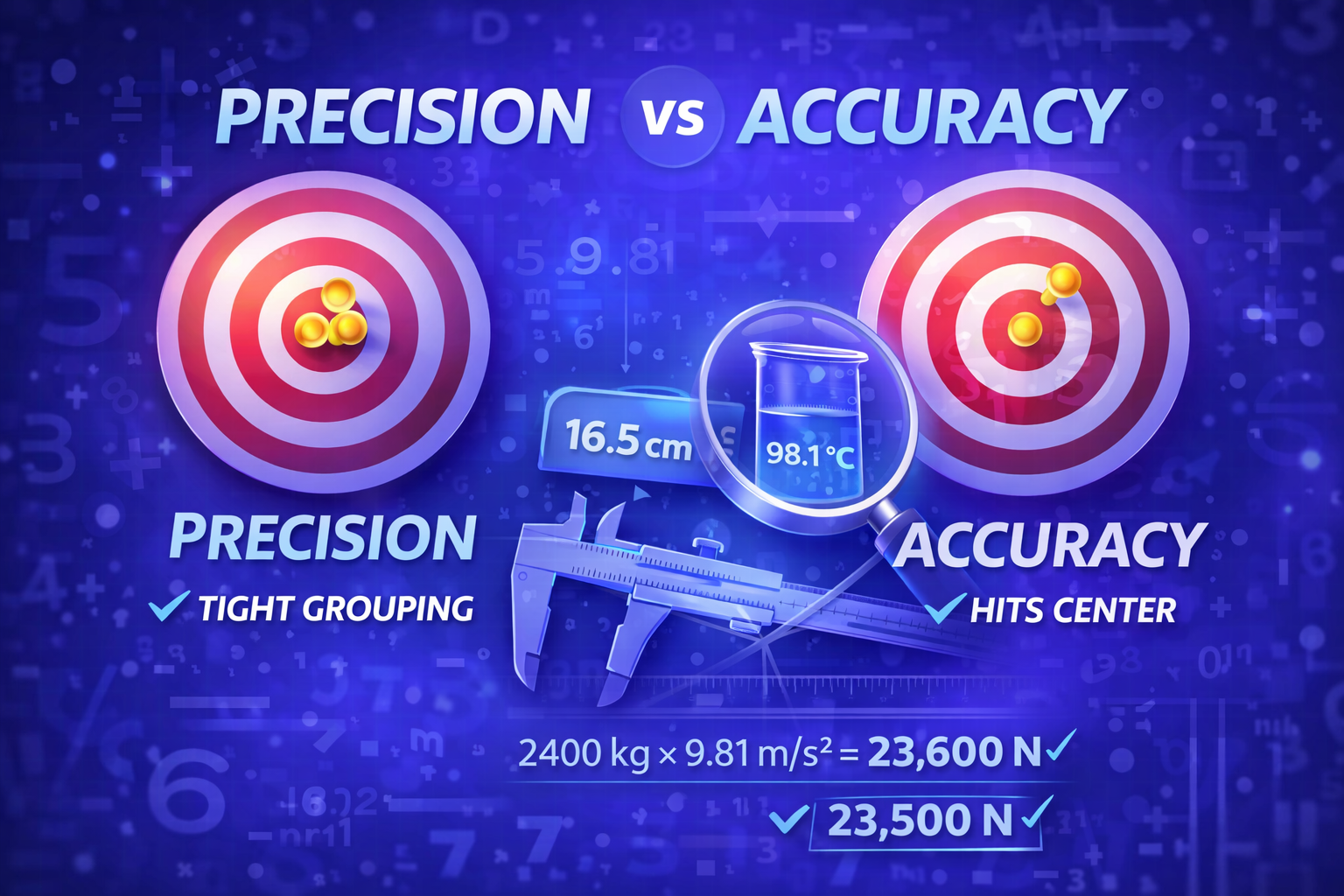

You often hear precision and accuracy used interchangeably, but they describe two distinct measurement qualities. Accuracy refers to how close your measurement is to the true or accepted value, while precision refers to how consistent your repeated measurements are with one another. If you measure a known five-pound weight and record values close to five pounds, you are accurate. If every reading clusters tightly around the same value, whether or not that value is correct, you are precise.

In scientific practice, you evaluate accuracy by comparing results to a standard reference or calibrated value. You evaluate precision by repeating measurements and examining variation between them, often using range or standard deviation. When you combine both qualities, you produce reliable data that supports strong decision-making and high-quality performance.
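As a minimal sketch of that comparison, the snippet below treats accuracy as the offset of the average reading from a reference value and precision as the sample standard deviation of the readings. The readings and the `summarize` helper are hypothetical and exist only for illustration.

```python
import statistics

def summarize(readings, reference):
    """Return (bias, spread) for repeated readings of a known reference."""
    mean = statistics.mean(readings)
    bias = mean - reference               # accuracy: offset of the average from the reference
    spread = statistics.stdev(readings)   # precision: sample standard deviation
    return bias, spread

# Hypothetical readings of a known five-pound weight.
readings_lb = [5.21, 5.19, 5.20, 5.22, 5.18]
bias, spread = summarize(readings_lb, reference=5.0)
print(f"bias = {bias:+.3f} lb, spread = {spread:.3f} lb")
```

In this made-up data set the readings agree closely with one another (small spread) but sit about 0.2 lb above the reference, so the scale would be precise but not accurate.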

Why Precision vs Accuracy Matters in Science

In laboratories across the United States, measurement quality determines whether research findings stand or collapse. When you calibrate instruments against certified standards, you strengthen accuracy and reduce systematic error. When you repeat experiments under controlled conditions and calculate relative standard deviation, you strengthen precision and reduce random error.

Even a small percentage error can significantly distort large-scale scientific results. For example, a 2 percent error in chemical concentration can alter reaction outcomes and compromise safety in pharmaceutical production. That is why you must evaluate both proximity to the true value and repeatability before trusting experimental data.
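To make the 2 percent figure concrete, here is a small sketch of the usual percent-error calculation. The concentration values are assumed for illustration and do not come from any real process.

```python
def percent_error(measured, true_value):
    """Absolute difference from the true value, expressed as a percentage of it."""
    if true_value == 0:
        raise ValueError("percent error is undefined for a true value of zero")
    return abs(measured - true_value) / abs(true_value) * 100.0

# Hypothetical target and measured concentrations in mg/mL.
target_mg_per_ml = 50.0
measured_mg_per_ml = 51.0
error_pct = percent_error(measured_mg_per_ml, target_mg_per_ml)
print(f"percent error = {error_pct:.1f}%")
```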

Real-World Examples You Can Understand

Imagine you are throwing darts at a target to visualize precision and accuracy. If your darts cluster tightly but far from the bullseye, you demonstrate high precision and low accuracy. If your darts scatter widely but are centered on the bullseye, you are accurate on average but show low precision.

When your darts cluster tightly at the center, you achieve both high accuracy and high precision, which represents ideal measurement performance. When your darts scatter randomly and miss the center, you lack both qualities, which means your measurement system requires improvement. This simple visualization helps you quickly diagnose measurement weaknesses in any context.

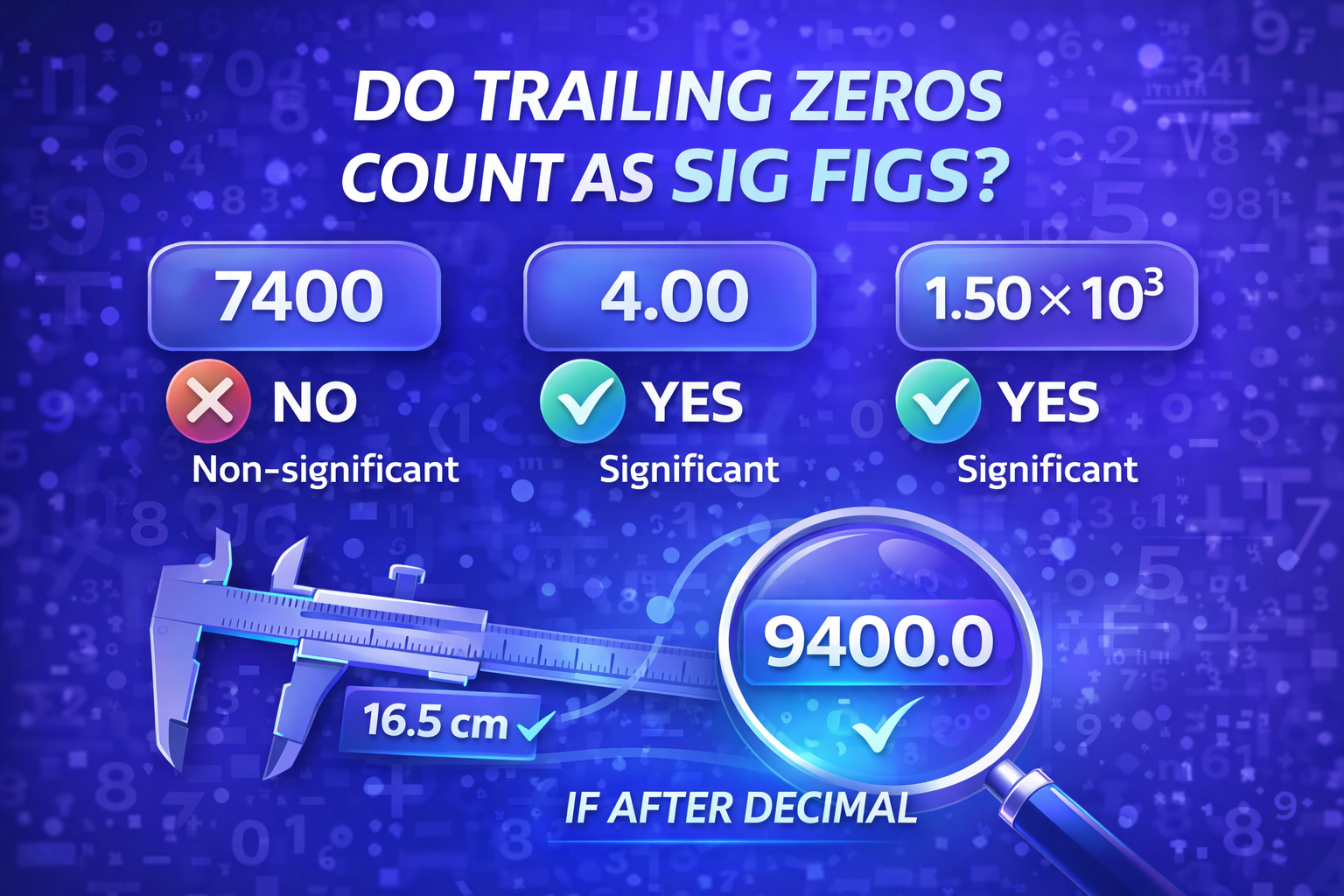

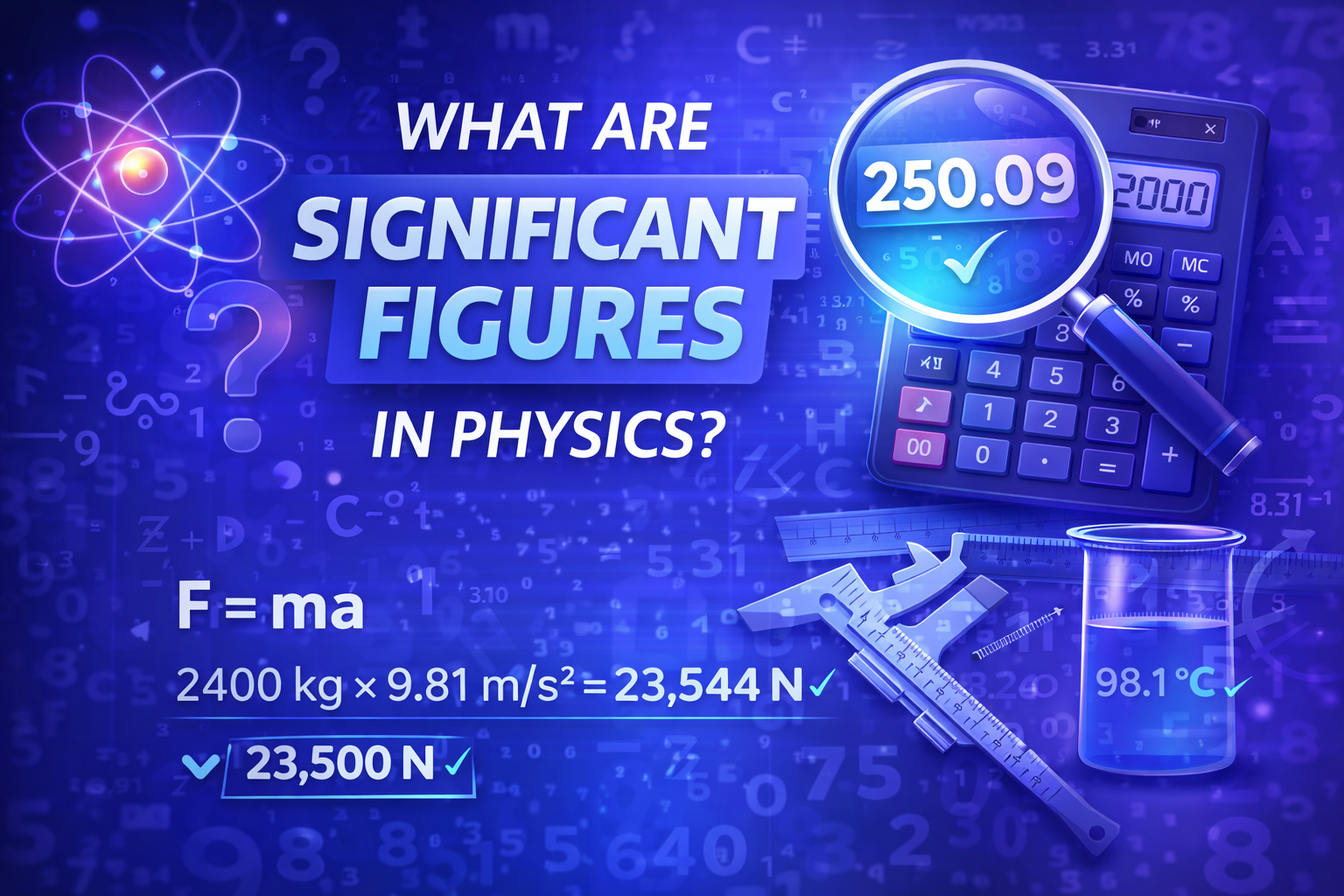
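The dartboard picture can be sketched in code by placing the bullseye at the origin, then checking how far the center of the throws sits from it (accuracy) and how widely the throws scatter around their own center (precision). The function name, the limits, and the coordinates below are all assumptions chosen for illustration.

```python
import math
import statistics

def diagnose_throws(throws, offset_limit=1.0, spread_limit=1.0):
    """Return (accurate, precise) for (x, y) throws with the bullseye at (0, 0)."""
    xs = [x for x, _ in throws]
    ys = [y for _, y in throws]
    center_x, center_y = statistics.mean(xs), statistics.mean(ys)
    # Accuracy: distance from the center of the throws to the bullseye.
    offset = math.hypot(center_x, center_y)
    # Precision: root-mean-square distance of the throws from their own center.
    spread = math.sqrt(statistics.mean(
        [(x - center_x) ** 2 + (y - center_y) ** 2 for x, y in throws]
    ))
    return offset <= offset_limit, spread <= spread_limit

# Hypothetical throws, in arbitrary board units.
tight_but_off = [(4.0, 4.1), (4.1, 4.0), (3.9, 4.0), (4.0, 3.9)]
centered_but_wide = [(3.0, 0.0), (-3.0, 0.0), (0.0, 3.0), (0.0, -3.0)]

print(diagnose_throws(tight_but_off))      # precise, not accurate
print(diagnose_throws(centered_but_wide))  # accurate on average, not precise
```

The limits of 1.0 are arbitrary. In a real measurement system you would set them from your own tolerance requirements.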

The Role of Significant Figures in Measurement

When you report numerical results, you communicate measurement quality through significant figures. Significant figures signal the level of precision supported by your measuring instrument and prevent overstating confidence in your data. If you report more digits than justified, you mislead readers about the reliability of your results.

To deepen your understanding of rounding and reporting rules, you can review the structured explanation provided in "What Are the Rules for Significant Figures," which clarifies how digits reflect measurement certainty. When you align precision with correct rounding, you protect data integrity and maintain professional credibility. This practice strengthens scientific communication and prevents confusion in technical fields.
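If you want to see the rounding step in code, the sketch below rounds a value to a chosen number of significant figures. The `round_sig` helper is a hypothetical name, and it relies on Python's built-in `round`, which rounds exact ties to the nearest even digit, so it may differ from a textbook "round half up" rule in edge cases.

```python
import math

def round_sig(value, sig_figs):
    """Round value to sig_figs significant figures."""
    if sig_figs < 1:
        raise ValueError("sig_figs must be at least 1")
    if value == 0:
        return 0.0
    # Position of the leading digit, e.g. 1 for 12.3456 and -3 for 0.0012345.
    exponent = math.floor(math.log10(abs(value)))
    return round(value, sig_figs - 1 - exponent)

print(round_sig(12.3456, 3))
print(round_sig(0.0012345, 2))
print(round_sig(98765.0, 2))
```

Note that a float cannot carry trailing zeros, so a result such as 2.50 would print as 2.5. For a written report you would still format the digits explicitly.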

How Resolution Affects Precision vs Accuracy

Resolution describes the smallest change your instrument can detect, and it sets a limit on the precision you can claim. If your scale only measures to the nearest whole number, you cannot claim fine-grained precision even if the result appears stable. Higher resolution allows you to capture smaller differences and gives you finer readings to compare against the true value.

However, resolution alone does not guarantee accuracy because calibration remains essential. An instrument can measure tiny increments consistently yet still drift away from the true value over time. You must therefore combine high resolution with proper calibration and repeated testing to ensure dependable performance.
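A short simulation can illustrate the point about coarse scales. It assumes an idealized display that simply rounds the true value to the nearest multiple of its resolution. The `quantize` helper and the weights are hypothetical.

```python
def quantize(true_value, resolution):
    """Simulate an idealized display that rounds to the nearest multiple of resolution."""
    return round(true_value / resolution) * resolution

# Hypothetical true weights, in pounds.
true_weights = [4.6, 4.9, 5.2, 5.4]

coarse = [quantize(w, 1.0) for w in true_weights]  # whole-pound scale
fine = [quantize(w, 0.1) for w in true_weights]    # tenth-of-a-pound scale

print(coarse)  # every reading looks identical, hiding real differences
print([f"{reading:.1f}" for reading in fine])
```

The coarse scale reports the same value for four different weights, so the apparent stability says nothing about fine-grained precision. The simulation leaves out calibration error entirely, which is the separate problem the paragraph above describes.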

Measuring Precision Statistically

You strengthen your evaluation of precision by applying statistical tools rather than relying on visual judgment. Standard deviation measures how tightly repeated measurements cluster around the mean, and relative standard deviation expresses that variability as a percentage. In many industrial environments, a lower percentage indicates tighter control and improved repeatability.

For example, if ten repeated measurements vary within 0.1 percent of each other, you demonstrate high precision. If they vary by 5 percent, you likely face instability in equipment or technique. By tracking statistical variation, you transform abstract measurement theory into actionable data.
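As a rough illustration, relative standard deviation can be computed with Python's standard `statistics` module. The two data sets are invented to contrast a stable process with an unstable one.

```python
import statistics

def relative_std_dev(readings):
    """Sample standard deviation as a percentage of the mean."""
    mean = statistics.mean(readings)
    if mean == 0:
        raise ValueError("relative standard deviation is undefined for a zero mean")
    return statistics.stdev(readings) / abs(mean) * 100.0

# Hypothetical repeated measurements of the same quantity.
stable = [100.00, 100.05, 99.95, 100.02, 99.98]
unstable = [100.0, 105.0, 95.0, 102.0, 98.0]

rsd_stable = relative_std_dev(stable)
rsd_unstable = relative_std_dev(unstable)
print(f"stable:   {rsd_stable:.3f}%")
print(f"unstable: {rsd_unstable:.3f}%")
```

The unstable set produces a relative standard deviation roughly one hundred times larger than the stable set, which is the kind of signal that points to an equipment or technique problem.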

Evaluating Accuracy Through Calibration

You cannot determine accuracy without comparing your measurement to a trusted standard. Calibration aligns your instrument with a known reference value and corrects systematic bias that pushes results consistently above or below the true value. Without calibration, even highly precise results may remain consistently wrong.

In manufacturing and engineering, companies often conduct regular calibration cycles to maintain compliance with quality standards. A properly calibrated device ensures that each measurement reflects reality rather than instrument drift. When you combine calibration with repeated testing, you secure both precision and accuracy.
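The simplest form of this correction can be sketched as a single-point offset: measure a reference standard several times, estimate the bias, and subtract it from later readings. This assumes the bias is constant across the measuring range, which real instruments do not always satisfy. The function names and values are hypothetical.

```python
import statistics

def estimate_offset(readings_of_reference, reference_value):
    """Estimate systematic bias from repeated readings of a known reference."""
    return statistics.mean(readings_of_reference) - reference_value

def apply_correction(raw_reading, offset):
    """Remove the estimated bias from a raw reading."""
    return raw_reading - offset

# Hypothetical readings of a 10.00 g reference mass.
offset = estimate_offset([10.12, 10.11, 10.13], reference_value=10.00)
corrected = apply_correction(25.42, offset)
print(f"offset = {offset:+.2f} g, corrected reading = {corrected:.2f} g")
```

An instrument whose bias changes with the size of the measurement would need a multi-point calibration rather than this single offset.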

When You Need Precision More Than Accuracy

Certain situations require tight consistency even if absolute correctness is secondary. In large-scale production, you may prioritize uniformity to ensure components fit together consistently, even if the entire system has a minor offset. Precision reduces waste, supports high-performance output, and stabilizes operations.

However, you must still verify that your consistent measurements remain within acceptable tolerance ranges. If you operate outside tolerance, uniform error can still compromise product quality. Understanding this balance helps you allocate resources wisely and protect operational efficiency.
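A tolerance check of this kind can be sketched in a few lines. The part dimensions, nominal value, and tolerance bands below are assumed for illustration.

```python
def within_tolerance(measurements, nominal, tolerance):
    """True if every measurement lies within nominal plus or minus tolerance."""
    return all(abs(m - nominal) <= tolerance for m in measurements)

# Hypothetical part lengths in mm: tightly grouped, but offset above nominal.
parts_mm = [25.08, 25.09, 25.08, 25.07]

print(within_tolerance(parts_mm, nominal=25.00, tolerance=0.10))  # loose band
print(within_tolerance(parts_mm, nominal=25.00, tolerance=0.05))  # tight band
```

The same consistent parts pass the looser band and fail the tighter one, which shows why uniformity alone does not settle whether a batch is acceptable.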

When Accuracy Is Non-Negotiable

In healthcare, aviation, and structural engineering, accuracy becomes critical because small deviations can create serious consequences. A medication dosage that deviates by even a few milligrams may affect patient safety. An engineering miscalculation in load-bearing structures can compromise long-term reliability.

In these contexts, you must verify proximity to the true value before considering consistency alone. Accuracy forms the foundation of safe and responsible measurement practices. When stakes are high, you must never rely on precision alone.

Practical Tools That Improve Measurement

You can strengthen both accuracy and precision by using digital tools that support correct rounding and reporting. When you calculate significant figures accurately, you avoid exaggerating precision and protect scientific transparency. A reliable Sig Fig Calculator simplifies this process and ensures your reported values align with accepted standards.

Beyond rounding, you must also understand why digit control matters in real experiments and engineering projects. If you want deeper insight into measurement discipline, reviewing "Why Significant Figures Matter in Science and Engineering" clarifies how numerical reporting influences credibility and safety. These resources support disciplined measurement habits without overstating confidence in your results.

Improving Your Measurement System

You can improve precision by stabilizing environmental conditions, refining technique, and maintaining equipment properly. You can improve accuracy by calibrating instruments regularly and comparing results against certified standards. When you combine both strategies, you create a dependable measurement system that supports confident decision-making.

Many quality-control programs in the United States integrate repeatability and reproducibility testing to evaluate instrument performance comprehensively. These methods identify variation between operators and equipment to ensure consistent outcomes. By systematically reviewing performance data, you elevate your measurement practices to professional standards.
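The idea behind repeatability and reproducibility testing can be illustrated with a deliberately simplified sketch. It is not a formal gauge study: it only compares the variation within each operator's readings against the variation between operator averages, and it assumes every operator took the same number of readings of the same part. All names and values are hypothetical.

```python
import statistics

def repeatability_and_reproducibility(readings_by_operator):
    """Return (within-operator spread, between-operator spread)."""
    groups = list(readings_by_operator.values())
    # Repeatability: pooled standard deviation within each operator's readings
    # (a plain average of variances, valid for equal group sizes).
    within_variances = [statistics.variance(g) for g in groups]
    repeatability = statistics.mean(within_variances) ** 0.5
    # Reproducibility (simplified): standard deviation of the operator averages.
    operator_means = [statistics.mean(g) for g in groups]
    reproducibility = statistics.stdev(operator_means)
    return repeatability, reproducibility

# Hypothetical readings of the same part by two operators.
readings = {
    "operator_a": [10.0, 10.1, 9.9],
    "operator_b": [10.5, 10.6, 10.4],
}
repeatability, reproducibility = repeatability_and_reproducibility(readings)
print(f"repeatability = {repeatability:.3f}, reproducibility = {reproducibility:.3f}")
```

Here each operator is consistent individually, yet the two averages differ by half a unit, which suggests the variation comes from technique or setup rather than from the instrument itself. A formal study would also separate the repeatability contribution that is mixed into the spread of operator averages.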

Conclusion

When you understand precision vs accuracy, you gain control over the reliability of every measurement you take. Precision ensures consistency, accuracy ensures correctness, and together they create trustworthy data that drives informed decision-making.

By applying calibration, statistical analysis, proper resolution, and disciplined reporting through significant figures, you transform raw numbers into dependable knowledge that supports science, engineering, healthcare, and business across the United States.

FAQs

What is the difference between precision and accuracy in simple terms?

Precision refers to how close repeated measurements are to each other, while accuracy refers to how close a measurement is to the true or accepted value. You can be precise without being accurate, and accurate without being consistently precise.

Can you have high precision but low accuracy?

Yes, you can achieve high precision but low accuracy when repeated measurements cluster tightly together yet remain far from the true value. This situation often indicates systematic error or poor calibration, even though the instrument produces consistent results each time.

Why are precision and accuracy important in scientific research?

Precision and accuracy directly affect the credibility of scientific findings because unreliable measurements lead to flawed conclusions. Accurate results ensure correctness, while precise measurements confirm repeatability, allowing researchers to trust experimental outcomes and confidently replicate studies.

How do you measure precision statistically?

You measure precision statistically by calculating standard deviation or relative standard deviation across repeated measurements. A smaller variation indicates higher precision, showing that results consistently cluster around the mean rather than fluctuating widely between trials.

How do you determine if a measurement is accurate?

You determine accuracy by comparing your measurement to a known or certified reference value. The closer your result is to the accepted true value, the more accurate it is. Calibrating your instrument against that reference reduces the systematic error in your data.

Does higher resolution always improve precision and accuracy?

Higher resolution allows an instrument to detect smaller measurement changes, which can improve precision, but it does not guarantee accuracy. Without proper calibration, even high-resolution tools may consistently produce values that deviate from the true standard.

Why do significant figures matter for precision and accuracy?

Significant figures communicate the level of certainty supported by a measurement and prevent overstating precision. Reporting too many digits implies precision the instrument cannot support, while correct rounding reflects the instrument’s limitations and preserves scientific integrity.

In which industries is accuracy more critical than precision?

Accuracy is especially critical in healthcare, aviation, structural engineering, and pharmaceuticals where small deviations can create serious safety risks. In these fields, measurements must align closely with true values to protect lives and maintain regulatory compliance.

When is precision more important than absolute accuracy?

Precision becomes more important in manufacturing and large-scale production where consistency ensures parts fit and function properly. Even if there is a small offset from the true value, tight repeatability maintains product reliability and operational efficiency.

How can you improve both precision and accuracy in measurements?

You can improve precision by stabilizing environmental conditions and repeating tests under controlled procedures. You improve accuracy by calibrating instruments regularly against trusted standards, ensuring results remain both consistent and correctly aligned with true values.